On Monday, Anthropic and OpenAI each announced a billion-dollar enterprise venture. Anthropic partnered with Blackstone, Goldman Sachs, and Hellman & Friedman to launch a $1.5 billion firm aimed at embedding AI directly inside mid-size companies. OpenAI finalized a $10 billion venture with TPG, Brookfield, Advent, and Bain Capital. Zero investor overlap between the two.

Goldman Sachs’s global head of asset and wealth management said the goal is “democratizing access” to AI-enabled engineers. Anthropic described the model plainly: “A typical engagement starts with a small team working closely with the customer to understand where Claude can have the biggest impact. From there, the company’s engineers — alongside Anthropic Applied AI staff — develop Claude-powered systems tailored to each organization’s operations.”

The financial world just split into two camps. And they are both betting on the same thesis.

If you have been around technology long enough, you have seen this before.

This Is the Web Moment All Over Again

In the mid-1990s, the internet existed. Technically sophisticated people understood what it was and what it could do. But most businesses had no idea how to get there, who to trust, what it would cost, or whether it was even worth doing.

Then the money moved. Big firms started taking positions. Consulting practices stood up overnight. Every company suddenly had a mandate to “get online” whether or not anyone knew what that meant. The pressure came from the top down — your competitors are doing this, your board is asking about it, your customers expect it.

What followed was a gold rush. Some companies built real things that lasted. Most hired the wrong people, built the wrong things, and spent the next five years trying to figure out why their expensive website was not growing revenue. The tools were real. The opportunity was real. The execution was a mess.

We are in that moment right now with AI. And the Anthropic and OpenAI announcements are the starting gun.

The Pressure Is About to Become Operational

Here is what most coverage is missing about these deals.

Blackstone and Goldman alone control hundreds of portfolio companies across every sector. When the private equity firm that owns your company says “your peers are already running this,” adoption is no longer a strategic choice you can defer to next quarter. It becomes operational gravity. You either figure it out or you explain why you did not.

This is the ERP playbook. SAP and Oracle did not take over enterprise operations because every company independently decided their software was the best option. They took over because the private equity and consulting infrastructure around them made adoption the path of least resistance. AI is following the same playbook, but faster and with higher stakes because the underlying technology actually transforms operations rather than just digitizing existing processes.

The companies that move now will have operating layers trained on their specific data, their specific workflows, their specific edge cases. Every day those systems run, the gap between them and the companies that waited gets wider. This is not a product you can buy later to catch up. It is infrastructure that compounds.

What Nobody Is Talking About Honestly

The announcements are exciting. The reality of implementation is significantly harder than any press release suggests. And this is where the early web parallel gets uncomfortably accurate.

Nobody knows exactly how to do this right.

There is genuine expertise in building AI systems. There are also thousands of consultants, agencies, and vendors who are going to tell every company they talk to that they have the answer. Most of them have been doing this for only eighteen months. They have seen a handful of implementations. They are learning in public on your dime.

The companies that got the most out of the early web were not the ones who hired the biggest agency or moved the fastest. They were the ones who were honest about what they did not know, started with the problem that actually mattered, and built something real before trying to build everything.

Governance is unsolved.

When an AI system is making operational decisions inside your business — routing workflows, flagging exceptions, generating documents, executing approvals — who is responsible when it gets something wrong? What are the audit trails? What are the guardrails that prevent a well-intentioned automation from creating a compliance problem, a security exposure, or an operational failure?

These questions do not have clean answers yet. The governance frameworks are still being written. Any vendor that tells you this is a solved problem is selling you something.
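None of that makes the basics optional. As a rough illustration only, here is a minimal Python sketch of two of the pieces named above: an audit trail and a guardrail that escalates to a person. Everything in it is a hypothetical assumption for the sake of the example (the `GuardedApprover` class, the dollar limit, the confidence threshold, the `owner` field). It is not a description of how any vendor or framework does this.

```python
import json
import time
from dataclasses import dataclass, asdict, field
from typing import List


@dataclass
class AuditRecord:
    """One row in the audit trail: what was decided, why, and who owns it."""
    action: str
    amount: float
    decision: str  # "auto_approved" or "escalated"
    reason: str
    owner: str     # the named human accountable for this automation
    timestamp: float = field(default_factory=time.time)


class GuardedApprover:
    """Hypothetical guardrail: auto-approve only inside explicit limits."""

    def __init__(self, auto_limit: float, owner: str, min_confidence: float = 0.9):
        self.auto_limit = auto_limit
        self.owner = owner
        self.min_confidence = min_confidence
        self.audit_log: List[AuditRecord] = []

    def decide(self, action: str, amount: float, model_confidence: float) -> str:
        # Approve automatically only when both the amount and the model's
        # confidence are inside the limits; everything else goes to a person.
        if amount <= self.auto_limit and model_confidence >= self.min_confidence:
            decision, reason = "auto_approved", "within limit and confidence threshold"
        else:
            decision, reason = "escalated", "outside limit or below confidence threshold"
        # Every decision is recorded, including the ones that were escalated.
        self.audit_log.append(
            AuditRecord(action, amount, decision, reason, self.owner)
        )
        return decision

    def export_log(self) -> str:
        """Serialize the audit trail so it can be reviewed outside the system."""
        return json.dumps([asdict(r) for r in self.audit_log], indent=2)


approver = GuardedApprover(auto_limit=500.0, owner="ops-lead")
approver.decide("refund", 120.0, 0.97)   # small and confident: handled automatically
approver.decide("refund", 5000.0, 0.99)  # over the limit: routed to a person
print(approver.export_log())
```

The code is the easy part. The hard part is agreeing on the limits, naming the owner, and deciding who actually reads the log.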

The implementation gap is real.

There is a significant difference between a working demo and a production system that holds up under real operating conditions. Most AI implementations fail not because the technology does not work but because the implementation does not account for the actual complexity of the operation: the edge cases, the exceptions, the processes that only work because the right person remembers to do the thing at the right time.

The ERP era is instructive here too. The software was genuinely powerful. The failure rate on implementations was staggering because the gap between what the system could do in theory and what the operation actually needed in practice was wider than anyone admitted upfront.

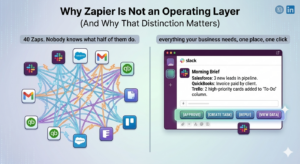

More AI can mean more burden, not less.

This is the one nobody talks about. Done poorly, an AI operating layer does not reduce coordination overhead. It adds a new system to maintain, new exceptions to manage, new failure modes to monitor, and new training requirements for the team. The people who were previously doing things manually are now managing an automation that sometimes works and sometimes does not, with no clear protocol for when to intervene.

The goal is not to automate processes. The goal is to eliminate the coordination burden so the team can focus on the work that actually matters. Those are different problems and they require different approaches.

What the Announcement Actually Validates

Despite all of this, the Anthropic and Goldman news matters and should not be dismissed.

It validates the thesis that the value in enterprise AI is not in the model. The base models are converging. Claude, GPT-4o, Gemini — they are all capable of roughly equivalent things for most business applications. The differentiator is not which model you use. It is the operating layer built around it. The proprietary data it is trained on. The workflows it is embedded in. The institutional knowledge that gets encoded into the system over time.

Anthropic said this plainly. Goldman said it plainly. The thesis is: embed engineers, build on the specific operation, create infrastructure that cannot be replicated by a competitor who shows up later. That is not a technology thesis. It is an architecture thesis. And it is right.

It also validates the forward-deployment model. The firms involved are not building SaaS products you subscribe to. They are embedding teams inside companies to build custom systems. That is the Palantir model applied to AI. It is more expensive, slower to scale, and significantly more effective than any plug-and-play solution because it accounts for the actual complexity of the operation rather than assuming every company’s workflows fit the same template.

The Question Every Business Should Be Asking Right Now

Not “should we do AI.” That question is effectively answered.

Not “which model should we use.” That question does not matter as much as it did a year ago.

The question is: what is the one operational problem in our business that, if we solved it in the next 90 days, would move everything else?

That is where implementation succeeds. Not with a sweeping digital transformation initiative. Not with a platform purchase that takes eighteen months to deploy. With a specific, scoped, well-understood problem that an operating layer can actually solve, built by people who understand both the technology and the operation.

The companies that got the most out of the early web did not try to do everything at once. They identified one thing their business genuinely needed to do better, built it, proved it worked, and expanded from there. The infrastructure grew with them.

That is the right model for AI implementation too. And it is the model the Anthropic and Goldman venture is describing, even if the scale of the announcement obscures how unglamorous the actual work is.

What Happens Next

The next eighteen months will look a lot like 1998 and 1999 looked for the web. A lot of money will move. A lot of implementations will start. A significant number will fail in ways that are predictable in retrospect but not obvious in the moment.

The companies that come out ahead will be the ones that started with the right problem, worked with people who were honest about what they did not know, built real things that held up in production, and had the governance in place to manage what they built responsibly.

The companies that struggle will be the ones that responded to board pressure with a vendor selection rather than a strategy, hired the loudest voice in the room, and tried to automate everything at once before they understood what their operation actually needed.

The technology is real. The opportunity is real. The gap between a good implementation and a bad one is wider than most people realize right now.

May 2026 could well be the moment enterprise AI stopped being a question and became an imperative. That is exactly what people said about the web in 1999. Some of them were right for the wrong reasons. The ones who got it right were the ones who stayed honest about what they were actually building and why.

Werx Studio builds custom AI operating layers for growing businesses. If you want to talk about what the right first problem looks like for your operation, book a walkthrough or reach out directly.